Why alternative data trials fail

And what systematic funds actually want from providers

Most alternative data evaluations fail before a fund even runs a backtest. This is not because the data lacks signal, but because it can't be evaluated with confidence. This article explains why that happens and what providers can do about it.

The reality of selling alt data to quant funds

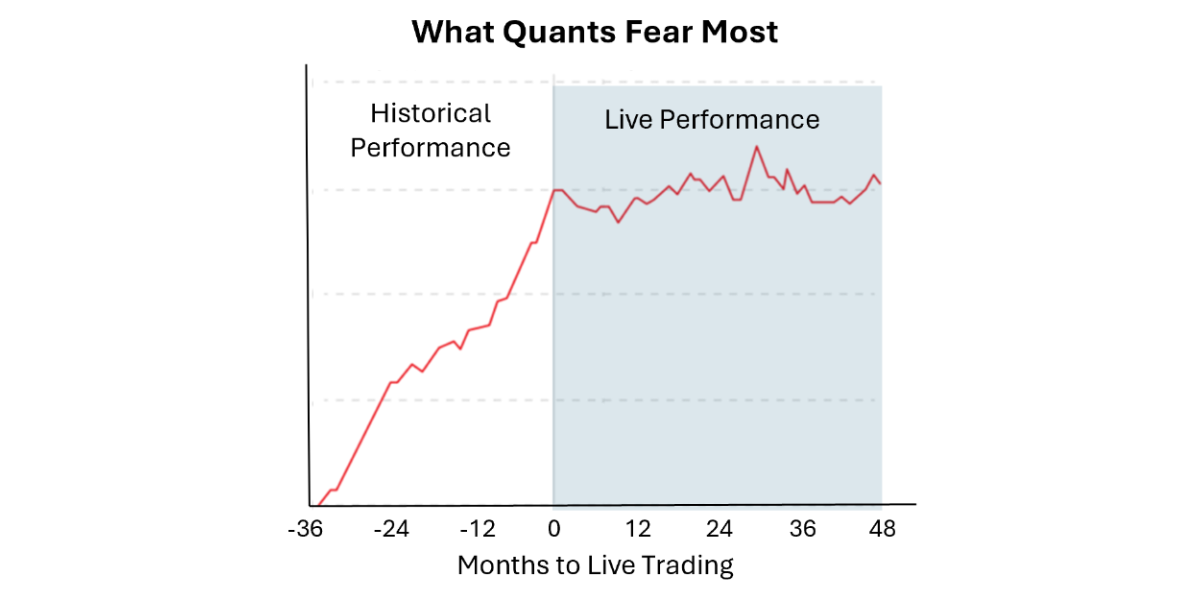

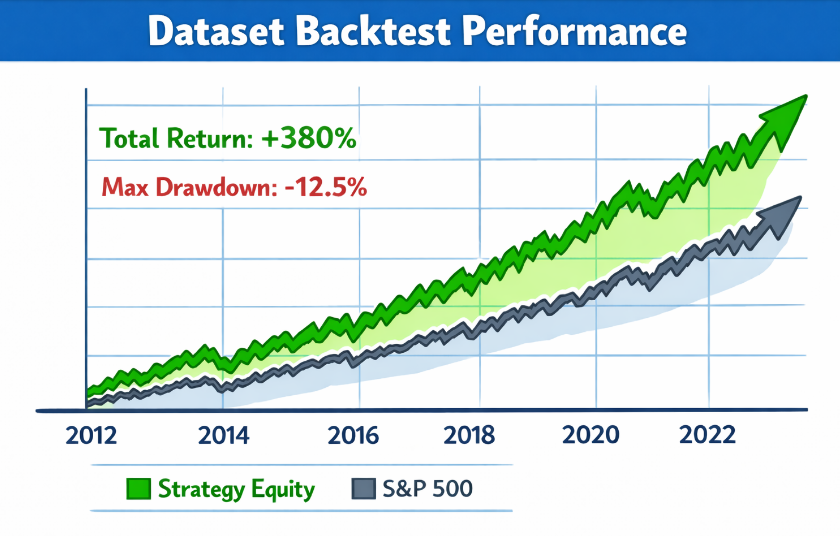

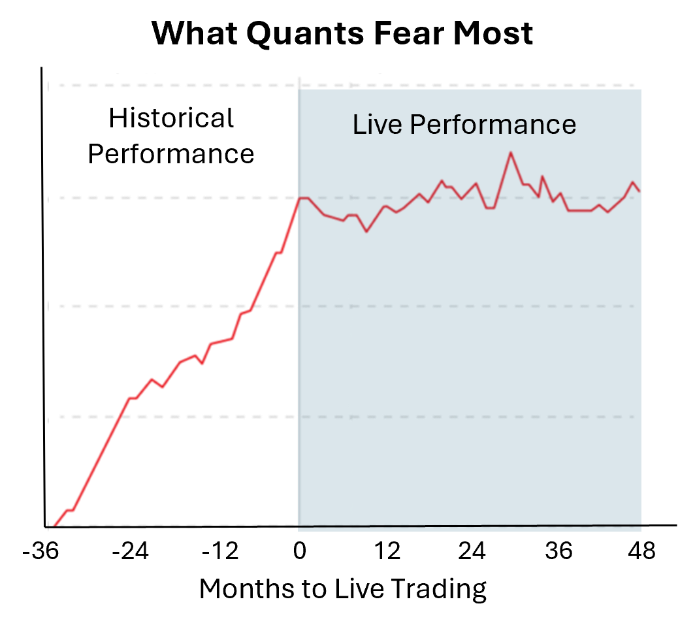

Almost every data provider’s pitch includes a chart that looks like this:

Unfortunately, live results rarely match backtested performance. In our conversations with quant teams at both large funds and small ones, the same pattern comes up repeatedly: promising datasets stall on packaging, not on signal quality.

The quant fund data evaluation funnel

The core challenge for funds that use alternative data isn’t finding signal; it’s developing enough conviction to act on it. If a model cannot be trusted at scale, it is quickly deprioritized.

Many strategies that look promising in-sample fail very expensively in live trading (out-of-sample). Even sound strategies with strong live records experience periods of underperformance, making short-term results difficult to distinguish from noise.

Funds use backtests to evaluate potential trading strategies, and backtests are only as reliable as the historical inputs they depend on. Those inputs are rarely fixed. Methodologies evolve. Records are revised. Companies enter and exit the coverage universe. Timestamps fail to reflect when funds could have traded on the data.

This is why the first questions quant funds ask about third-party data are often about provenance: how methodology has changed over time, how revisions are tracked, and what timestamps mean in practice.

The limits of data trials

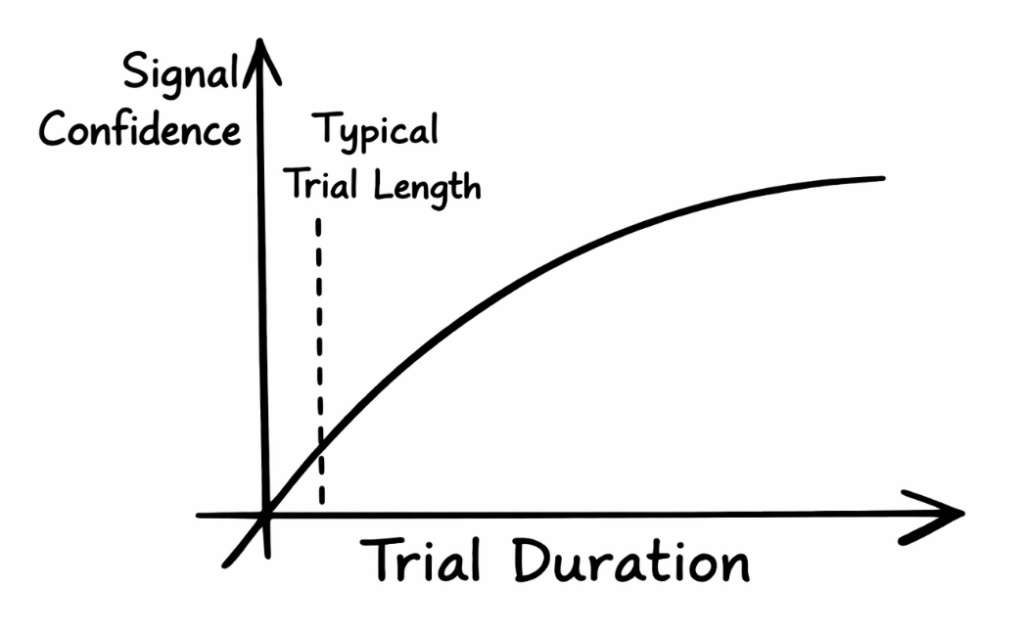

Given these challenges — and many investors’ history of false positives from underperforming strategies — funds use live trials to build confidence in third-party data. But trials are a blunt and expensive tool.

Evaluating a dataset via trial requires scarce engineering, research, and operational resources. For large funds, the direct and indirect (opportunity) cost of a data trial can be substantial – often comparable to the cost of the data itself.

Even when trials occur, they are often too brief to separate signal from noise, causing many datasets to fail trials for reasons unrelated to actual dataset quality.

Why broken causality matters more than messy data

Quant investors are used to working with imperfect data. In fact, cleaning messy data can be a source of competitive advantage. Broken causality, however, cannot be easily repaired and is the core failure mode behind many stalled data evaluations.

Broken causality occurs when an analyst cannot reliably determine which version of the data was available at the time of a backtested trading decision. Even a dataset of perfect earnings forecasts for public companies may not be usable in a trading context if a fund cannot determine with certainty whether the data was available before the earnings announcement. A one-minute timing lag can make the difference between millions in profit and millions in losses.

The same issue occurs when coverage expands, identifiers are remapped, or backfilled values appear in the data before they were actually known.

Causality is frequently undermined by subtle, seemingly beneficial operations such as historical revisions, methodology updates, versioning, and timestamp adjustments. When causality in third-party data is ambiguous, the chain of cause-and-effect collapses, and investors lose confidence in all derived data and signals.
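
One way to make the availability question concrete is to store, for every value, the time it was actually published, and then to query the history as of the decision time. The following is a minimal Python sketch under that assumption. The `Observation` record, the `value_as_of` helper, the `ACME` entity, and all sample figures are hypothetical illustrations, not any provider’s actual schema.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class Observation:
    entity: str
    period: str              # what the value describes, e.g. "2024Q1"
    value: float
    published_at: datetime   # when this value was actually released (assumed to be recorded)


def value_as_of(history: list[Observation], entity: str, period: str,
                decision_time: datetime) -> Optional[Observation]:
    """Return the latest value already published at decision_time, or None."""
    known = [
        o for o in history
        if o.entity == entity and o.period == period
        and o.published_at <= decision_time
    ]
    return max(known, key=lambda o: o.published_at, default=None)


# Hypothetical history: an initial value and a later revision of the same period.
history = [
    Observation("ACME", "2024Q1", 1.10, datetime(2024, 4, 10, 9, 0)),
    Observation("ACME", "2024Q1", 1.32, datetime(2024, 4, 25, 16, 5)),
]

# A decision made five minutes before the revision must see the original value.
before_revision = value_as_of(history, "ACME", "2024Q1", datetime(2024, 4, 25, 16, 0))
after_revision = value_as_of(history, "ACME", "2024Q1", datetime(2024, 4, 25, 16, 10))
too_early = value_as_of(history, "ACME", "2024Q1", datetime(2024, 4, 1))
```

If only the revised value were stored, with no `published_at`, a backtest could not tell these three cases apart, which is the ambiguity described above.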

A recent Glassnode note illustrates the issue. They reran the same trading strategy on two versions of the same metric: one using revised historical data, the other using strictly point-in-time data. The revised version showed a 120% return; the point-in-time version returned just 40%. This isn’t an anomaly. Backtests on revised data almost always outperform point-in-time backtests, because lookahead bias flatters the results.

This is why funds routinely walk away from promising high-signal datasets without even a trial.

Case study: when good data fails for the wrong reason

You’ve spent months building a dataset of historical sales indicators for large technology companies. The data is well-constructed, professionally maintained, and shows strong backtest performance across multiple historical periods. A mid-sized systematic fund agrees to trial it.

Three months in, the fund’s live results are modest and inconsistent. Their research team asks about historical revisions and methodology changes. You give reasonable answers — but you have no way to let them verify independently. They notice small discrepancies between the historical and live data. You know the discrepancies are benign, but you can’t prove it.

The fund labels the trial inconclusive and passes. You’ve spent months supporting the evaluation, given away free data access, and received a polite no with no actionable feedback. You don’t know whether the signal disappointed or the fund simply couldn’t develop conviction. Neither do they. And you’re unlikely to get another shot with this fund for years.

Now imagine you could have pointed them to an independently verifiable revision record. The same discrepancies exist, but now the fund can see for itself that the historical data hasn’t changed.

The fund can now evaluate the dataset using the data’s full historical record rather than a limited trial window. Trial performance is now a small component of the overall purchase decision rather than the critical indicator of live performance.

The trial result is the same — but the conversation, and the fund’s confidence in its own evaluation of the data, is completely different.

If you’re not sure how well your dataset’s history can be verified, there is a simple diagnostic we call the Replay Test.

The Replay Test

Can a buyer reconstruct what your dataset looked like on any past date, including schema, entity mappings, and what has changed since then, and quickly begin credible backtesting?

If the answer is “not reliably,” that’s where many evaluations stall. If your dataset passes the Replay Test, you’ve addressed one of the most common reasons that promising data evaluations fail to convert.

Improved historical defensibility doesn’t change the underlying signal. It changes how confidently buyers can interpret their own research results — and that confidence is often the difference between an inconclusive trial and sustained adoption.
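
The Replay Test can be sketched in a few lines, assuming the provider keeps an append-only revision log in which a correction is a new row rather than an in-place edit. The log layout, the `snapshot` and `changes_since` helpers, and the sample rows below are hypothetical. The sketch covers values only; schema and entity mappings would need the same treatment.

```python
from datetime import date

# Hypothetical append-only revision log: (recorded_on, entity, period, value).
# Rows are never edited in place; a correction is appended as a new row.
REVISION_LOG = [
    (date(2024, 1, 5), "ACME", "2023-12", 100.0),
    (date(2024, 1, 5), "BETA", "2023-12", 50.0),
    (date(2024, 2, 5), "ACME", "2024-01", 104.0),
    (date(2024, 3, 1), "ACME", "2023-12", 97.0),   # later revision of an old value
    (date(2024, 3, 1), "GAMA", "2023-12", 20.0),   # backfilled when coverage expanded
]


def snapshot(log, as_of):
    """Rebuild the dataset as a buyer would have seen it on `as_of`."""
    view = {}
    for recorded_on, entity, period, value in sorted(log, key=lambda r: r[0]):
        if recorded_on <= as_of:
            view[(entity, period)] = value  # later rows overwrite earlier ones
    return view


def changes_since(log, as_of, today):
    """Map each key added or revised between `as_of` and `today` to (old, new)."""
    then, now = snapshot(log, as_of), snapshot(log, today)
    return {
        key: (then.get(key), now[key])
        for key in now
        if then.get(key) != now[key]
    }


feb_view = snapshot(REVISION_LOG, date(2024, 2, 10))
diff = changes_since(REVISION_LOG, date(2024, 2, 10), date(2024, 3, 15))
```

Here `feb_view` contains neither the March revision nor the backfilled entity, and `diff` lists exactly those two changes, so a buyer can backtest on what was known at the time and separately inspect what has moved since.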

Other practical steps that reduce evaluation friction

In systematic workflows you’re not just selling a dataset; usability and reproducibility often matter as much as the underlying signal.

A few practical tips for providers:

- Make coverage and taxonomy unambiguous. Ticker changes, delistings, and corporate actions create hidden failure modes unless entity mapping is explicit and point-in-time.

- Treat delivery as part of the product. Keep the schema stable. Version every change. Publish a clear change log.

- Be explicit about revisions. If historical values, methodologies, or universes can change, say so, and specify what changes, why, and when.
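
As an illustration of the first tip, explicit point-in-time entity mapping could look like the following Python sketch. It assumes each ticker-to-entity link carries a validity interval. The `TICKER_MAP` table, the `resolve` helper, and every ticker and identifier in it are made up for the example.

```python
from datetime import date
from typing import Optional

# Hypothetical mapping table: (ticker, entity_id, valid_from, valid_to).
# valid_to is exclusive; None means the mapping is still in effect.
TICKER_MAP = [
    ("OLDCO", "ent-001", date(2015, 1, 1), date(2022, 6, 1)),
    ("NEWCO", "ent-001", date(2022, 6, 1), None),              # ticker change
    ("OLDCO", "ent-002", date(2023, 3, 1), None),              # ticker reused by another company
    ("GONE", "ent-003", date(2010, 1, 1), date(2020, 9, 15)),  # delisted
]


def resolve(ticker: str, on: date) -> Optional[str]:
    """Return the entity that `ticker` referred to on the date `on`, if any."""
    for tkr, entity_id, valid_from, valid_to in TICKER_MAP:
        if tkr == ticker and valid_from <= on and (valid_to is None or on < valid_to):
            return entity_id
    return None


print(resolve("OLDCO", date(2020, 1, 1)))  # the original company
print(resolve("OLDCO", date(2024, 1, 1)))  # a different company using the same ticker
print(resolve("GONE", date(2021, 1, 1)))   # no mapping after the delisting
```

Resolving by ticker alone would silently merge the two `OLDCO` companies and drop the delisted one from history; keeping the validity dates makes those cases visible to the buyer.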

Following these suggestions doesn’t guarantee buyer engagement, but it significantly reduces the chances of stalled evaluations.

At validityBase, we build infrastructure that helps data providers pass the Replay Test and package data to maximize the chance of successful trials.

That includes audit trails for funds to verify what was delivered and when — as well as the packaging, documentation, and delivery infrastructure that funds expect before they’ll commit research resources. The result is shorter evaluation cycles, fewer stalled trials, and a dataset that’s easier for research teams to defend internally.

To assess your own readiness for systematic evaluations, see the one-page checklist we use with providers. Or reach out at trials@vbase.com to walk through it together.

Dan Averbukh is the co-founder and CEO of validityBase, where he works on data reliability and evaluation infrastructure for systematic investing.